At a seminar recently hosted by the Swedish JIC KIAindex, the focus was on the impact of ad blockers, both as a revenue drain and as a source of polluted web metrics for advertisers and web site owners. Even robots got mentioned! It is evident, however, that one more thing has yet to hit the radar...

One little-known source of polluted data is repeat page views, which seem to elude many. Sure, everyone has scrambled to set auto refresh markers in the data in order to exclude auto refreshes, yet these non-robotic but automated, non-human repeat requests still wind up in the web analytics data.

These can be called 1440s, based on the simple fact that there are 1440 minutes per day and that such repeat requests tend to follow fixed repeat patterns. Well, that is if the device used a) has its time set properly, b) is on a decent connection, and c) makes no attempt to evade detection.

Typically, requests of this kind hit home pages at a fairly regular frequency, with request intervals of 5, 10, or 15 minutes. Yes, these are not human requests despite being browser based.
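
To put numbers on that, here is a minimal sketch of the arithmetic, assuming a single always-on client that refreshes at a fixed interval around the clock. The function name `requests_per_day` is made up for illustration.

```python
MINUTES_PER_DAY = 24 * 60  # 1440, hence the nickname


def requests_per_day(interval_minutes: int) -> int:
    """Home page views one always-on, auto-refreshing client generates per day."""
    return MINUTES_PER_DAY // interval_minutes


for interval in (5, 10, 15):
    views = requests_per_day(interval)
    print(f"every {interval:>2} minutes -> {views} home page views per day")
```

At a 5 minute interval that is 288 page views per day from one "visitor", and a refresh every single minute yields the full 1440.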

The intelligent variants don't just run 24x7, but only during work hours, when someone might actually benefit from the data collection. These are, however, not very common...

The repeat requests are quite easy to set up: just build a frame page with an auto refresh every 60 seconds and iframe the page you want to fetch as part of it. Sure, it might look great in your lobby for visitors to glance at. But it is seriously messing with the metrics of the web site owner.

The dangerous side of this is that anyone doing it might be breaking local laws. Since many websites store their content in databases, this kind of data fetching very probably results in parts of the fetched data being stored in another database that belongs to another entity. One such blatant occurrence happened a few years ago, resulting in the most expensive link click in Swedish internet history (article in Swedish, so consider using Google Translate if your Swedish skills are poor).

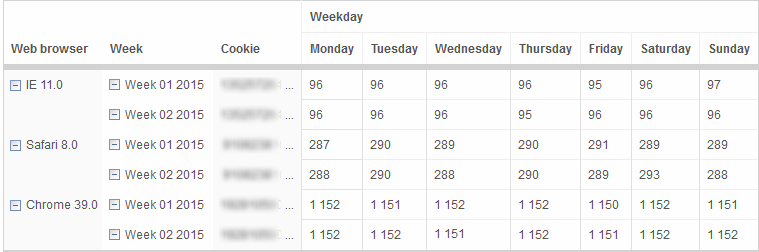

Detecting such 1440s is dead simple: filter on your home page and set up max/min value filters to see the typical paced, frequent page requests.

Make sure to track each unique cookie over time and check up on the source of the calls!
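
As a rough illustration of the detection idea, here is a sketch that groups home page requests by cookie and flags cookies whose gaps between requests are suspiciously even. It is one possible approach, not how any particular analytics tool does it. The function name, the `(visitor_id, timestamp)` input shape, the thresholds and the sample data are all assumptions made for the example.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import median


def find_paced_visitors(hits, min_hits=20, tolerance_seconds=30, min_share=0.9):
    """Return {visitor_id: typical_gap_seconds} for suspiciously regular visitors.

    hits: iterable of (visitor_id, timestamp) pairs for home page requests only.
    """
    by_visitor = defaultdict(list)
    for visitor_id, timestamp in hits:
        by_visitor[visitor_id].append(timestamp)

    flagged = {}
    for visitor_id, stamps in by_visitor.items():
        if len(stamps) < min_hits:
            continue  # too few requests to judge pacing
        stamps.sort()
        gaps = [(b - a).total_seconds() for a, b in zip(stamps, stamps[1:])]
        typical = median(gaps)
        # Count the gaps that sit close to the typical gap.
        near = sum(1 for gap in gaps if abs(gap - typical) <= tolerance_seconds)
        if near / len(gaps) >= min_share:
            flagged[visitor_id] = typical
    return flagged


# Hypothetical data: one display refreshing every 5 minutes, one human reader.
start = datetime(2024, 1, 1, 8, 0)
paced = [("cookie-A", start + timedelta(minutes=5 * i)) for i in range(30)]
human = [("cookie-B", start + timedelta(minutes=m)) for m in (0, 2, 3, 47, 190)]
print(find_paced_visitors(paced + human))
```

Using the median gap plus a tolerance, rather than a strict min/max spread, keeps a work-hours-only 1440 flagged despite its long overnight gap. The thresholds would need tuning against real traffic.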

Do note, however, that the exception to easy detection is the 1440s that actively use multiple IPs, rotate their user agents and also block cookies. These are extremely difficult to detect because of the dodging techniques they use.

They can, however, be dealt with in the same way media houses deal with ad blockers. It is a matter of priority and focus.

Having studied this kind of traffic for some time, it is very evident what type of business does this kind of repeat content pulling. As an example, PR agencies and lobby firms benefit from this up-to-the-minute monitoring. In order to track and effectively manage client communication, monitoring the media sites is part of the game to ensure swift damage control. Some entities sure seem to need it.

Media houses seem to track each other as well, which is one reason why articles seem to contain the same content but with the words jumbled in an effort to make it look unique.

In order to ensure that the currency of JICs isn't diluted by 1440s as well, the exclusion of data generated by 1440 visitors that hit the home page needs to be evaluated. Removing auto refreshes alone is not going to save the day. With these repeat page requests removed, the massive near-24-hour visit durations of such visitors also drop down to actual levels when web sites summarize this metric. Any decent web analytics tool should be capable of doing this in the hands of a solid web analyst.
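
To show how much a single 1440 can skew a duration summary, here is a small sketch that compares the average first-to-last request span with and without an excluded visitor. The helper names, the use of request span as a stand-in for visit duration, and the sample data are assumptions for illustration. Real tools define visits and durations in their own ways.

```python
from datetime import datetime, timedelta


def visit_span_minutes(hits, excluded=frozenset()):
    """First-to-last request span per visitor in minutes, skipping excluded IDs."""
    first, last = {}, {}
    for visitor_id, timestamp in hits:
        if visitor_id in excluded:
            continue
        first[visitor_id] = min(timestamp, first.get(visitor_id, timestamp))
        last[visitor_id] = max(timestamp, last.get(visitor_id, timestamp))
    return {v: (last[v] - first[v]).total_seconds() / 60 for v in first}


def mean_span(spans):
    """Average span across visitors, or 0.0 when there are none."""
    return sum(spans.values()) / len(spans) if spans else 0.0


# Hypothetical day: a lobby display refreshing every 5 minutes, plus one human.
day = datetime(2024, 1, 1)
display = [("display", day + timedelta(minutes=5 * i)) for i in range(288)]
reader = [("reader", day + timedelta(hours=9)),
          ("reader", day + timedelta(hours=9, minutes=4))]
hits = display + reader

print(mean_span(visit_span_minutes(hits)))                        # 719.5
print(mean_span(visit_span_minutes(hits, excluded={"display"})))  # 4.0
```

In this made-up example one display drags the average from 4 minutes to nearly 12 hours.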

Given how time spent in front of an ad is starting to strongly influence the cost of advertising, this is no longer optional. It is a matter of ensuring reliability in the metrics, or else this programmatic assault on time spent, page view count and web site usage will make web site analytics quite unreliable.

On the upside, any owner of a web site that is riddled with these 1440 requests knows their content is relevant and closely monitored. It just needs to be handled properly.